在 TensorFlow.org 上檢視 在 TensorFlow.org 上檢視

|

在 Google Colab 中執行 在 Google Colab 中執行

|

在 GitHub 上檢視原始碼 在 GitHub 上檢視原始碼

|

下載筆記本 下載筆記本

|

本教學課程透過三個範例介紹自動編碼器:基礎知識、圖片去噪和異常偵測。

自動編碼器是一種特殊的神經網路類型,經過訓練可將輸入複製到輸出。例如,給定手寫數字的圖片,自動編碼器首先將圖片編碼為較低維度的潛在表示法,然後將潛在表示法解碼回圖片。自動編碼器學習壓縮資料,同時最大限度地減少重建誤差。

若要進一步瞭解自動編碼器,請考慮閱讀 Ian Goodfellow、Yoshua Bengio 和 Aaron Courville 合著的《Deep Learning》第 14 章。

匯入 TensorFlow 和其他函式庫

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

import tensorflow as tf

from sklearn.metrics import accuracy_score, precision_score, recall_score

from sklearn.model_selection import train_test_split

from tensorflow.keras import layers, losses

from tensorflow.keras.datasets import fashion_mnist

from tensorflow.keras.models import Model

載入資料集

首先,您將使用 Fashion MNIST 資料集訓練基本自動編碼器。此資料集中的每張圖片都是 28x28 像素。

(x_train, _), (x_test, _) = fashion_mnist.load_data()

x_train = x_train.astype('float32') / 255.

x_test = x_test.astype('float32') / 255.

print (x_train.shape)

print (x_test.shape)

Downloading data from https://storage.googleapis.com/tensorflow/tf-keras-datasets/train-labels-idx1-ubyte.gz 29515/29515 ━━━━━━━━━━━━━━━━━━━━ 0s 0us/step Downloading data from https://storage.googleapis.com/tensorflow/tf-keras-datasets/train-images-idx3-ubyte.gz 26421880/26421880 ━━━━━━━━━━━━━━━━━━━━ 0s 0us/step Downloading data from https://storage.googleapis.com/tensorflow/tf-keras-datasets/t10k-labels-idx1-ubyte.gz 5148/5148 ━━━━━━━━━━━━━━━━━━━━ 0s 0us/step Downloading data from https://storage.googleapis.com/tensorflow/tf-keras-datasets/t10k-images-idx3-ubyte.gz 4422102/4422102 ━━━━━━━━━━━━━━━━━━━━ 0s 0us/step (60000, 28, 28) (10000, 28, 28)

第一個範例:基本自動編碼器

定義具有兩個密集層的自動編碼器:encoder,將圖片壓縮為 64 維潛在向量;以及 decoder,從潛在空間重建原始圖片。

若要定義模型,請使用 Keras 模型子類別化 API。

class Autoencoder(Model):

def __init__(self, latent_dim, shape):

super(Autoencoder, self).__init__()

self.latent_dim = latent_dim

self.shape = shape

self.encoder = tf.keras.Sequential([

layers.Flatten(),

layers.Dense(latent_dim, activation='relu'),

])

self.decoder = tf.keras.Sequential([

layers.Dense(tf.math.reduce_prod(shape).numpy(), activation='sigmoid'),

layers.Reshape(shape)

])

def call(self, x):

encoded = self.encoder(x)

decoded = self.decoder(encoded)

return decoded

shape = x_test.shape[1:]

latent_dim = 64

autoencoder = Autoencoder(latent_dim, shape)

autoencoder.compile(optimizer='adam', loss=losses.MeanSquaredError())

使用 x_train 作為輸入和目標來訓練模型。encoder 將學習將資料集從 784 維壓縮到潛在空間,而 decoder 將學習重建原始圖片。 .

autoencoder.fit(x_train, x_train,

epochs=10,

shuffle=True,

validation_data=(x_test, x_test))

Epoch 1/10 WARNING: All log messages before absl::InitializeLog() is called are written to STDERR I0000 00:00:1713230807.172583 14227 service.cc:145] XLA service 0x7fe4cc007e20 initialized for platform CUDA (this does not guarantee that XLA will be used). Devices: I0000 00:00:1713230807.172638 14227 service.cc:153] StreamExecutor device (0): Tesla T4, Compute Capability 7.5 I0000 00:00:1713230807.172643 14227 service.cc:153] StreamExecutor device (1): Tesla T4, Compute Capability 7.5 I0000 00:00:1713230807.172646 14227 service.cc:153] StreamExecutor device (2): Tesla T4, Compute Capability 7.5 I0000 00:00:1713230807.172648 14227 service.cc:153] StreamExecutor device (3): Tesla T4, Compute Capability 7.5 131/1875 ━━━━━━━━━━━━━━━━━━━━ 2s 1ms/step - loss: 0.1034 I0000 00:00:1713230807.858362 14227 device_compiler.h:188] Compiled cluster using XLA! This line is logged at most once for the lifetime of the process. 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 5s 2ms/step - loss: 0.0397 - val_loss: 0.0134 Epoch 2/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 2s 1ms/step - loss: 0.0124 - val_loss: 0.0111 Epoch 3/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 3s 1ms/step - loss: 0.0102 - val_loss: 0.0097 Epoch 4/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 2s 1ms/step - loss: 0.0095 - val_loss: 0.0094 Epoch 5/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 2s 1ms/step - loss: 0.0092 - val_loss: 0.0092 Epoch 6/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 3s 1ms/step - loss: 0.0090 - val_loss: 0.0090 Epoch 7/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 2s 1ms/step - loss: 0.0089 - val_loss: 0.0089 Epoch 8/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 2s 1ms/step - loss: 0.0088 - val_loss: 0.0090 Epoch 9/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 2s 1ms/step - loss: 0.0087 - val_loss: 0.0089 Epoch 10/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 2s 1ms/step - loss: 0.0087 - val_loss: 0.0088 <keras.src.callbacks.history.History at 0x7fe6854d9b50>

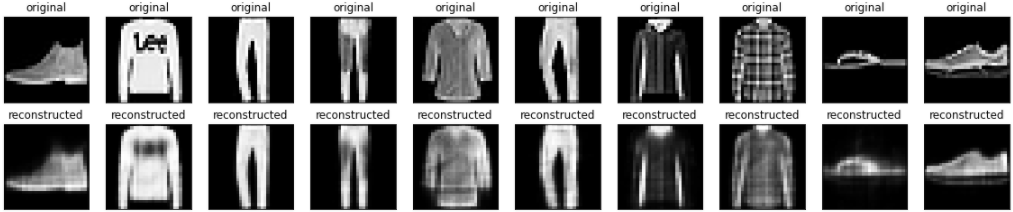

現在模型已訓練完成,讓我們透過編碼和解碼測試集中的圖片來測試它。

encoded_imgs = autoencoder.encoder(x_test).numpy()

decoded_imgs = autoencoder.decoder(encoded_imgs).numpy()

n = 10

plt.figure(figsize=(20, 4))

for i in range(n):

# display original

ax = plt.subplot(2, n, i + 1)

plt.imshow(x_test[i])

plt.title("original")

plt.gray()

ax.get_xaxis().set_visible(False)

ax.get_yaxis().set_visible(False)

# display reconstruction

ax = plt.subplot(2, n, i + 1 + n)

plt.imshow(decoded_imgs[i])

plt.title("reconstructed")

plt.gray()

ax.get_xaxis().set_visible(False)

ax.get_yaxis().set_visible(False)

plt.show()

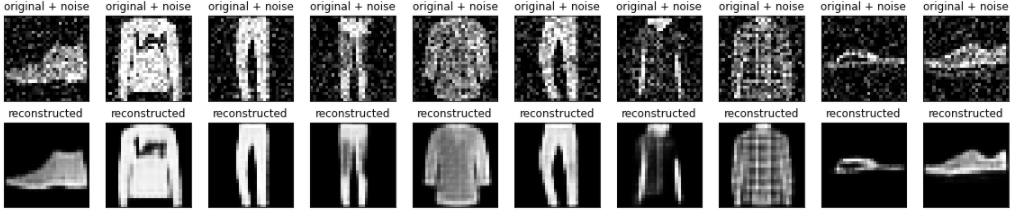

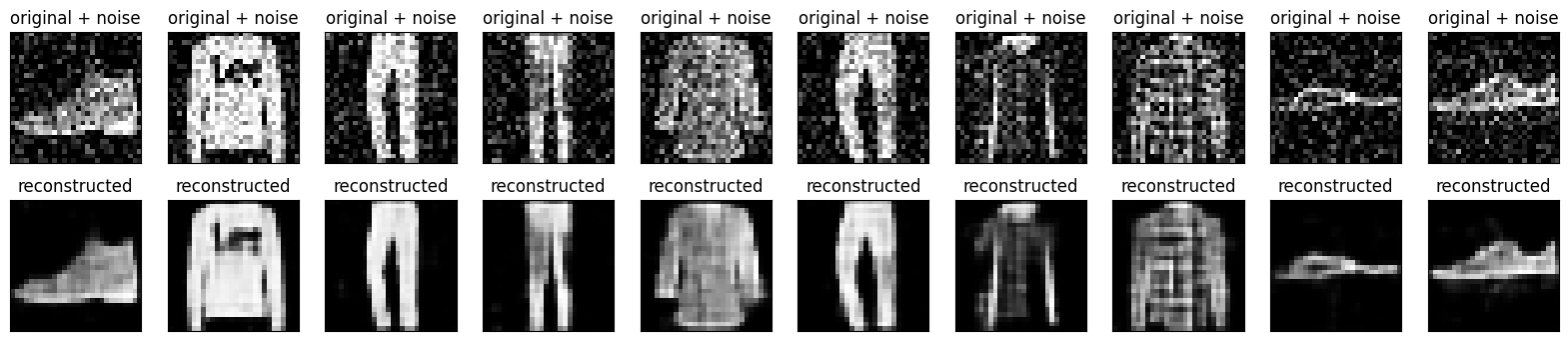

第二個範例:圖片去噪

自動編碼器也可以訓練為從圖片中移除雜訊。在以下章節中,您將透過對每張圖片套用隨機雜訊來建立 Fashion MNIST 資料集的雜訊版本。然後,您將使用雜訊圖片作為輸入來訓練自動編碼器,並使用原始圖片作為目標。

讓我們重新匯入資料集以省略先前所做的修改。

(x_train, _), (x_test, _) = fashion_mnist.load_data()

x_train = x_train.astype('float32') / 255.

x_test = x_test.astype('float32') / 255.

x_train = x_train[..., tf.newaxis]

x_test = x_test[..., tf.newaxis]

print(x_train.shape)

(60000, 28, 28, 1)

將隨機雜訊新增至圖片

noise_factor = 0.2

x_train_noisy = x_train + noise_factor * tf.random.normal(shape=x_train.shape)

x_test_noisy = x_test + noise_factor * tf.random.normal(shape=x_test.shape)

x_train_noisy = tf.clip_by_value(x_train_noisy, clip_value_min=0., clip_value_max=1.)

x_test_noisy = tf.clip_by_value(x_test_noisy, clip_value_min=0., clip_value_max=1.)

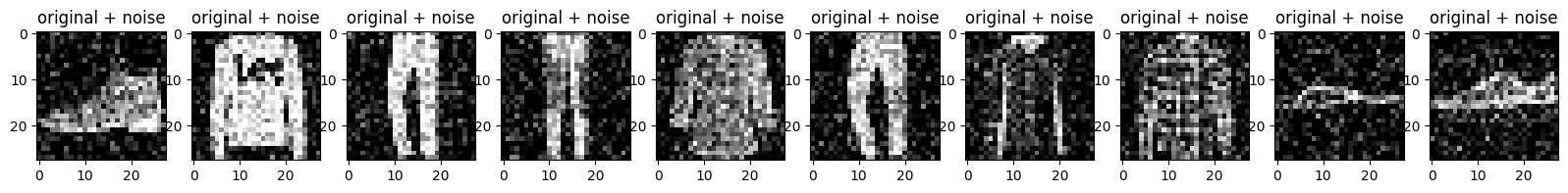

繪製雜訊圖片。

n = 10

plt.figure(figsize=(20, 2))

for i in range(n):

ax = plt.subplot(1, n, i + 1)

plt.title("original + noise")

plt.imshow(tf.squeeze(x_test_noisy[i]))

plt.gray()

plt.show()

定義卷積自動編碼器

在此範例中,您將使用編碼器中的 Conv2D 層和解碼器中的 Conv2DTranspose 層來訓練卷積自動編碼器。

class Denoise(Model):

def __init__(self):

super(Denoise, self).__init__()

self.encoder = tf.keras.Sequential([

layers.Input(shape=(28, 28, 1)),

layers.Conv2D(16, (3, 3), activation='relu', padding='same', strides=2),

layers.Conv2D(8, (3, 3), activation='relu', padding='same', strides=2)])

self.decoder = tf.keras.Sequential([

layers.Conv2DTranspose(8, kernel_size=3, strides=2, activation='relu', padding='same'),

layers.Conv2DTranspose(16, kernel_size=3, strides=2, activation='relu', padding='same'),

layers.Conv2D(1, kernel_size=(3, 3), activation='sigmoid', padding='same')])

def call(self, x):

encoded = self.encoder(x)

decoded = self.decoder(encoded)

return decoded

autoencoder = Denoise()

autoencoder.compile(optimizer='adam', loss=losses.MeanSquaredError())

autoencoder.fit(x_train_noisy, x_train,

epochs=10,

shuffle=True,

validation_data=(x_test_noisy, x_test))

Epoch 1/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 7s 2ms/step - loss: 0.0332 - val_loss: 0.0094 Epoch 2/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 3s 2ms/step - loss: 0.0089 - val_loss: 0.0081 Epoch 3/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 4s 2ms/step - loss: 0.0079 - val_loss: 0.0076 Epoch 4/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 4s 2ms/step - loss: 0.0074 - val_loss: 0.0073 Epoch 5/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 4s 2ms/step - loss: 0.0072 - val_loss: 0.0071 Epoch 6/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 4s 2ms/step - loss: 0.0070 - val_loss: 0.0070 Epoch 7/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 4s 2ms/step - loss: 0.0069 - val_loss: 0.0069 Epoch 8/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 4s 2ms/step - loss: 0.0068 - val_loss: 0.0068 Epoch 9/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 4s 2ms/step - loss: 0.0068 - val_loss: 0.0067 Epoch 10/10 1875/1875 ━━━━━━━━━━━━━━━━━━━━ 3s 2ms/step - loss: 0.0067 - val_loss: 0.0067 <keras.src.callbacks.history.History at 0x7fe6883e5a30>

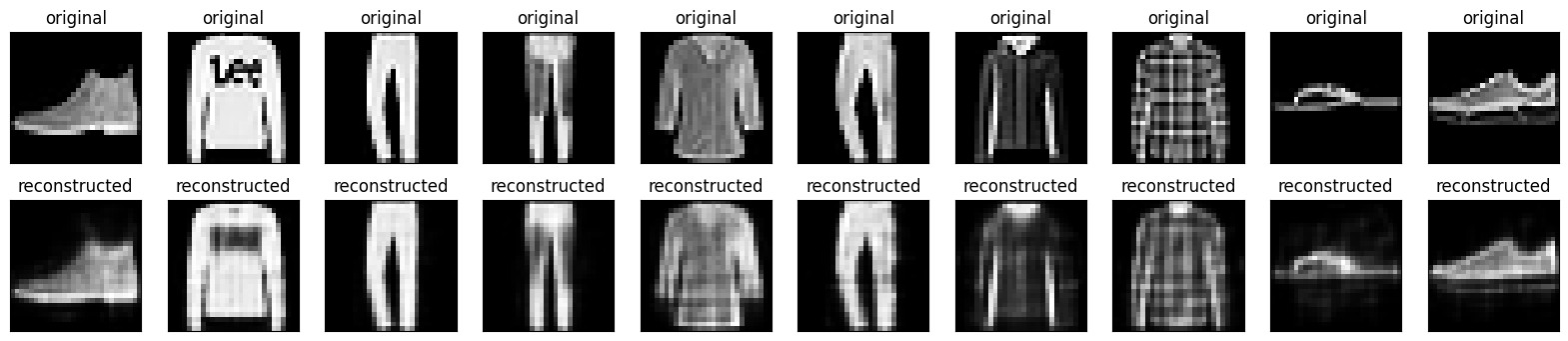

讓我們看一下編碼器的摘要。請注意圖片如何從 28x28 降取樣至 7x7。

autoencoder.encoder.summary()

解碼器將圖片從 7x7 向上取樣回 28x28。

autoencoder.decoder.summary()

繪製雜訊圖片和自動編碼器產生的去噪圖片。

encoded_imgs = autoencoder.encoder(x_test_noisy).numpy()

decoded_imgs = autoencoder.decoder(encoded_imgs).numpy()

n = 10

plt.figure(figsize=(20, 4))

for i in range(n):

# display original + noise

ax = plt.subplot(2, n, i + 1)

plt.title("original + noise")

plt.imshow(tf.squeeze(x_test_noisy[i]))

plt.gray()

ax.get_xaxis().set_visible(False)

ax.get_yaxis().set_visible(False)

# display reconstruction

bx = plt.subplot(2, n, i + n + 1)

plt.title("reconstructed")

plt.imshow(tf.squeeze(decoded_imgs[i]))

plt.gray()

bx.get_xaxis().set_visible(False)

bx.get_yaxis().set_visible(False)

plt.show()

第三個範例:異常偵測

總覽

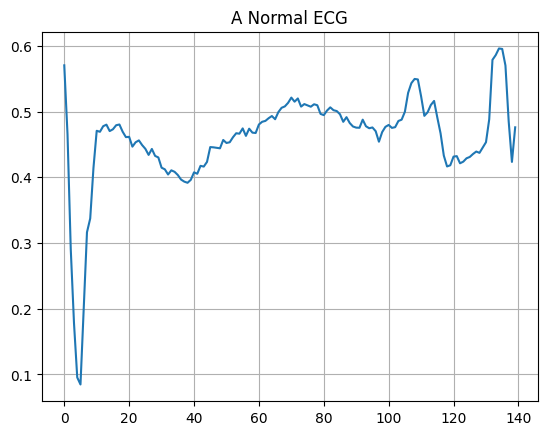

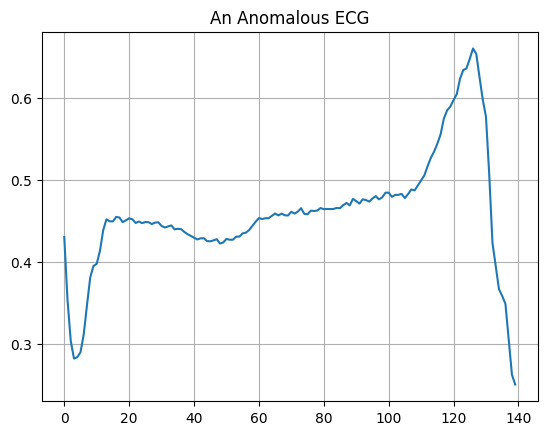

在此範例中,您將訓練自動編碼器以偵測 ECG5000 資料集中的異常。此資料集包含 5,000 張心電圖,每張心電圖有 140 個資料點。您將使用資料集的簡化版本,其中每個範例都標記為 0 (對應於異常節律) 或 1 (對應於正常節律)。您有興趣識別異常節律。

您將如何使用自動編碼器偵測異常?回想一下,自動編碼器經過訓練以最大限度地減少重建誤差。您將僅針對正常節律訓練自動編碼器,然後使用它重建所有資料。我們的假設是異常節律將具有更高的重建誤差。然後,如果重建誤差超過固定閾值,您將節律分類為異常。

載入 ECG 資料

您將使用的資料集基於 timeseriesclassification.com 中的資料集。

# Download the dataset

dataframe = pd.read_csv('http://storage.googleapis.com/download.tensorflow.org/data/ecg.csv', header=None)

raw_data = dataframe.values

dataframe.head()

# The last element contains the labels

labels = raw_data[:, -1]

# The other data points are the electrocadriogram data

data = raw_data[:, 0:-1]

train_data, test_data, train_labels, test_labels = train_test_split(

data, labels, test_size=0.2, random_state=21

)

將資料正規化為 [0,1]。

min_val = tf.reduce_min(train_data)

max_val = tf.reduce_max(train_data)

train_data = (train_data - min_val) / (max_val - min_val)

test_data = (test_data - min_val) / (max_val - min_val)

train_data = tf.cast(train_data, tf.float32)

test_data = tf.cast(test_data, tf.float32)

您將僅使用正常節律來訓練自動編碼器,這些節律在此資料集中標記為 1。將正常節律與異常節律分開。

train_labels = train_labels.astype(bool)

test_labels = test_labels.astype(bool)

normal_train_data = train_data[train_labels]

normal_test_data = test_data[test_labels]

anomalous_train_data = train_data[~train_labels]

anomalous_test_data = test_data[~test_labels]

繪製正常心電圖。

plt.grid()

plt.plot(np.arange(140), normal_train_data[0])

plt.title("A Normal ECG")

plt.show()

繪製異常心電圖。

plt.grid()

plt.plot(np.arange(140), anomalous_train_data[0])

plt.title("An Anomalous ECG")

plt.show()

建構模型

class AnomalyDetector(Model):

def __init__(self):

super(AnomalyDetector, self).__init__()

self.encoder = tf.keras.Sequential([

layers.Dense(32, activation="relu"),

layers.Dense(16, activation="relu"),

layers.Dense(8, activation="relu")])

self.decoder = tf.keras.Sequential([

layers.Dense(16, activation="relu"),

layers.Dense(32, activation="relu"),

layers.Dense(140, activation="sigmoid")])

def call(self, x):

encoded = self.encoder(x)

decoded = self.decoder(encoded)

return decoded

autoencoder = AnomalyDetector()

autoencoder.compile(optimizer='adam', loss='mae')

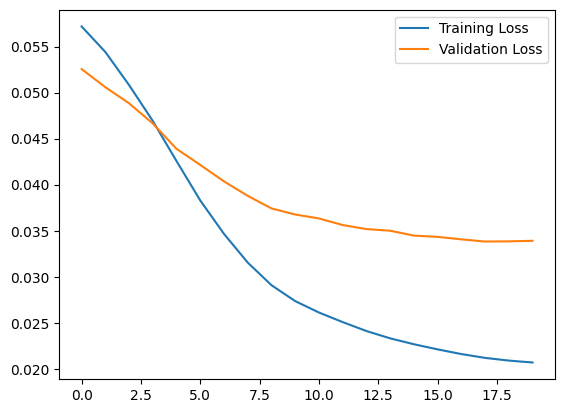

請注意,自動編碼器僅使用正常心電圖進行訓練,但使用完整的測試集進行評估。

history = autoencoder.fit(normal_train_data, normal_train_data,

epochs=20,

batch_size=512,

validation_data=(test_data, test_data),

shuffle=True)

Epoch 1/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 5s 494ms/step - loss: 0.0575 - val_loss: 0.0526 Epoch 2/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0549 - val_loss: 0.0506 Epoch 3/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 13ms/step - loss: 0.0514 - val_loss: 0.0488 Epoch 4/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 13ms/step - loss: 0.0475 - val_loss: 0.0466 Epoch 5/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0432 - val_loss: 0.0439 Epoch 6/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 13ms/step - loss: 0.0389 - val_loss: 0.0421 Epoch 7/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 13ms/step - loss: 0.0353 - val_loss: 0.0404 Epoch 8/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 13ms/step - loss: 0.0319 - val_loss: 0.0388 Epoch 9/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0290 - val_loss: 0.0374 Epoch 10/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 13ms/step - loss: 0.0276 - val_loss: 0.0368 Epoch 11/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0264 - val_loss: 0.0363 Epoch 12/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0254 - val_loss: 0.0356 Epoch 13/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 13ms/step - loss: 0.0242 - val_loss: 0.0352 Epoch 14/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0234 - val_loss: 0.0350 Epoch 15/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0229 - val_loss: 0.0345 Epoch 16/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0222 - val_loss: 0.0343 Epoch 17/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 13ms/step - loss: 0.0216 - val_loss: 0.0341 Epoch 18/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0212 - val_loss: 0.0338 Epoch 19/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0210 - val_loss: 0.0338 Epoch 20/20 5/5 ━━━━━━━━━━━━━━━━━━━━ 0s 14ms/step - loss: 0.0208 - val_loss: 0.0339

plt.plot(history.history["loss"], label="Training Loss")

plt.plot(history.history["val_loss"], label="Validation Loss")

plt.legend()

<matplotlib.legend.Legend at 0x7fe6704c3520>

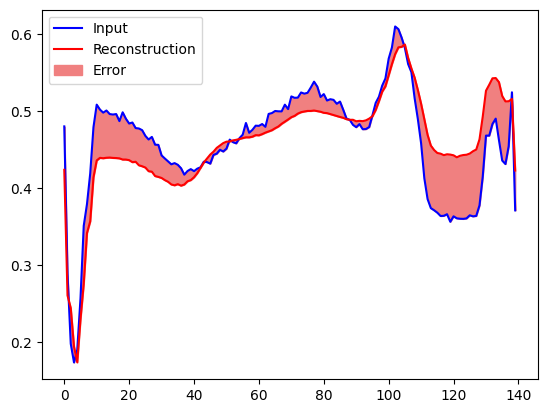

如果重建誤差大於正常訓練範例的一個標準差,您很快就會將心電圖分類為異常。首先,讓我們繪製訓練集中的正常心電圖、自動編碼器編碼和解碼後的重建,以及重建誤差。

encoded_data = autoencoder.encoder(normal_test_data).numpy()

decoded_data = autoencoder.decoder(encoded_data).numpy()

plt.plot(normal_test_data[0], 'b')

plt.plot(decoded_data[0], 'r')

plt.fill_between(np.arange(140), decoded_data[0], normal_test_data[0], color='lightcoral')

plt.legend(labels=["Input", "Reconstruction", "Error"])

plt.show()

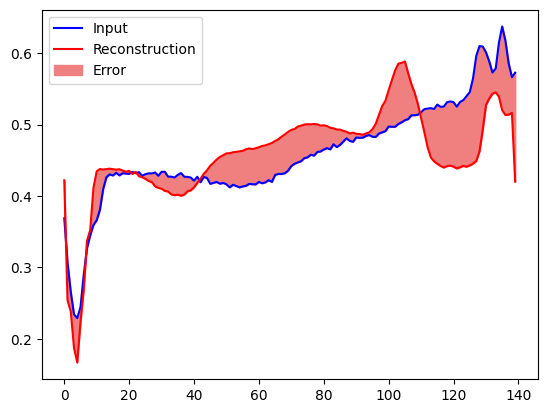

建立類似的圖表,這次針對異常測試範例。

encoded_data = autoencoder.encoder(anomalous_test_data).numpy()

decoded_data = autoencoder.decoder(encoded_data).numpy()

plt.plot(anomalous_test_data[0], 'b')

plt.plot(decoded_data[0], 'r')

plt.fill_between(np.arange(140), decoded_data[0], anomalous_test_data[0], color='lightcoral')

plt.legend(labels=["Input", "Reconstruction", "Error"])

plt.show()

偵測異常

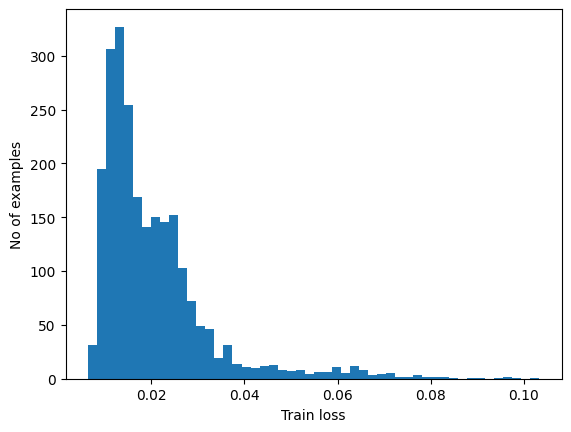

透過計算重建損失是否大於固定閾值來偵測異常。在本教學課程中,您將計算訓練集中正常範例的平均絕對誤差,然後如果重建誤差高於訓練集的一個標準差,則將未來的範例分類為異常。

繪製訓練集中正常心電圖的重建誤差

reconstructions = autoencoder.predict(normal_train_data)

train_loss = tf.keras.losses.mae(reconstructions, normal_train_data)

plt.hist(train_loss[None,:], bins=50)

plt.xlabel("Train loss")

plt.ylabel("No of examples")

plt.show()

74/74 ━━━━━━━━━━━━━━━━━━━━ 1s 6ms/step

選擇一個高於平均值一個標準差的閾值。

threshold = np.mean(train_loss) + np.std(train_loss)

print("Threshold: ", threshold)

Threshold: 0.033047937

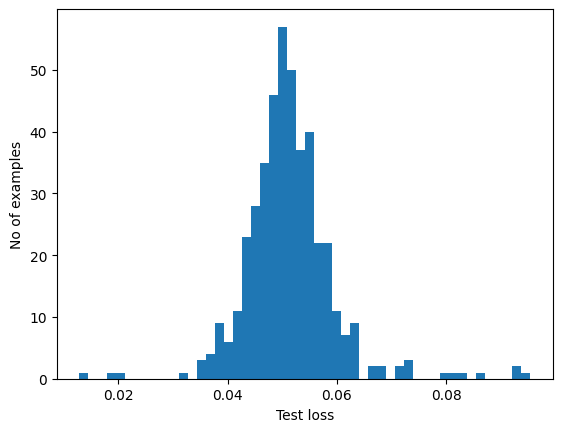

如果您檢查測試集中異常範例的重建誤差,您會注意到大多數的重建誤差都大於閾值。透過改變閾值,您可以調整分類器的精確度和召回率。

reconstructions = autoencoder.predict(anomalous_test_data)

test_loss = tf.keras.losses.mae(reconstructions, anomalous_test_data)

plt.hist(test_loss[None, :], bins=50)

plt.xlabel("Test loss")

plt.ylabel("No of examples")

plt.show()

14/14 ━━━━━━━━━━━━━━━━━━━━ 0s 22ms/step

如果重建誤差大於閾值,則將心電圖分類為異常。

def predict(model, data, threshold):

reconstructions = model(data)

loss = tf.keras.losses.mae(reconstructions, data)

return tf.math.less(loss, threshold)

def print_stats(predictions, labels):

print("Accuracy = {}".format(accuracy_score(labels, predictions)))

print("Precision = {}".format(precision_score(labels, predictions)))

print("Recall = {}".format(recall_score(labels, predictions)))

preds = predict(autoencoder, test_data, threshold)

print_stats(preds, test_labels)

Accuracy = 0.944 Precision = 0.9921875 Recall = 0.9071428571428571

後續步驟

若要進一步瞭解使用自動編碼器進行異常偵測,請查看 Victor Dibia 使用 TensorFlow.js 建置的絕佳互動式範例。如需真實世界的用例,您可以瞭解 Airbus 如何使用 TensorFlow 偵測國際太空站遙測資料中的異常。若要進一步瞭解基礎知識,請考慮閱讀 François Chollet 的這篇部落格文章。如需更多詳細資訊,請查看 Ian Goodfellow、Yoshua Bengio 和 Aaron Courville 合著的《Deep Learning》第 14 章。